dd: The Ultimate Backup Solution

In the 8 years I have been using computers, I have lost valuable data about twice a year on average. I have tried all kinds of solutions, only to fail miserably by forgetting to back up some important bits and pieces of information before upgrading my distro.

Backup solutions mostly fall into two approaches: archiving and cloning. If space is limited, you can archive your important data using utilities such as tar. This was in fact the approach I had been using until now. The downside turned out to be that files inside the backup were hard to get at. Say I needed a small text file from a 200 GB archive: because tar stores files one after another with no index, it would take me around 20 minutes just to reach it.
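A minimal sketch of the archiving approach, assuming GNU tar with gzip compression. The temporary directory and the file names (`notes.txt`, `other.txt`) are hypothetical stand-ins for a real home directory and a real 200 GB archive. It shows why pulling out one small file is slow: tar has to decompress and read through the archive sequentially to find the requested path.

```shell
#!/bin/sh
# Sketch: archive a directory with tar, then restore a single file.
# All paths here are hypothetical, created in a throwaway temp directory.
set -e

work=$(mktemp -d)
trap 'rm -rf "$work"' EXIT

mkdir -p "$work/data/docs"
echo "important notes" > "$work/data/docs/notes.txt"
echo "other stuff"     > "$work/data/docs/other.txt"

# Create a gzip-compressed archive; -C stores paths relative to $work
# (data/docs/...) instead of absolute paths.
tar -czf "$work/backup.tar.gz" -C "$work" data

# Listing the members also means reading through the whole archive,
# because the tar format has no central index.
tar -tzf "$work/backup.tar.gz"

# Extract just one member. tar still decompresses and scans the archive
# sequentially to find it, which is what makes retrieval from a huge
# archive slow.
mkdir -p "$work/restore"
tar -xzf "$work/backup.tar.gz" -C "$work/restore" data/docs/notes.txt

cat "$work/restore/data/docs/notes.txt"
```

On a small archive this is instant; the sequential scan only starts to hurt once the archive grows to hundreds of gigabytes.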

That is why I decided to switch to a different approach. My laptop has a 320 GB hard disk, and I also own a 320 GB Western Digital Passport for extra data. To take advantage of the matching capacities, I bought a second Passport, a 500 GB one, moved the “extra” data to it, and then cloned the entire laptop hard disk to its 320 GB external cousin.

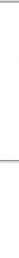

$ dd if=/dev/sda of=/dev/sdb

That is all. dd’s performance was disappointing: it took around 15 hours to clone the entire 320 GB. Nevertheless, this time around I was satisfied with the final backup. Not only was it a bit-by-bit replica of my original data, but it was also a repository I could browse easily just by plugging in the USB drive.
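Fifteen hours for 320 GB works out to roughly 6 MB/s (320 × 10^9 bytes / 54,000 s ≈ 5.9 × 10^6 bytes/s). GNU dd copies in 512-byte blocks unless told otherwise, and a larger `bs=` value is a commonly suggested way to speed up whole-disk copies; whether it would have helped on this particular hardware is an assumption, not something measured here. The sketch below, assuming GNU coreutils dd, clones one "disk" to another with a 4 MiB block size and then checks that the copy really is bit-for-bit identical. To keep it safe to run, two ordinary files stand in for `/dev/sda` and `/dev/sdb`.

```shell
#!/bin/sh
# Sketch: clone a disk image with dd, then verify the copy byte for byte.
# source.img and target.img are hypothetical stand-ins for /dev/sda and
# /dev/sdb, so nothing real gets overwritten.
set -e

work=$(mktemp -d)
trap 'rm -rf "$work"' EXIT
src="$work/source.img"   # stands in for /dev/sda
dst="$work/target.img"   # stands in for /dev/sdb

# Build a 16 MiB "source disk" filled with random bytes.
dd if=/dev/urandom of="$src" bs=1M count=16 2>/dev/null

# Clone it in 4 MiB chunks instead of dd's default 512-byte blocks.
# conv=fsync flushes the written data to the target before dd exits.
dd if="$src" of="$dst" bs=4M conv=fsync

# cmp exits non-zero at the first differing byte, which stops the
# script here because of set -e.
cmp "$src" "$dst"
echo "clone verified"
```

On real hardware the equivalent would be something like `sudo dd if=/dev/sda of=/dev/sdb bs=4M conv=fsync status=progress` (`status=progress` needs GNU coreutils 8.24 or later). dd overwrites the target without asking, so double-check the device names first, and make sure the target is at least as large as the source. Running `cmp /dev/sda /dev/sdb` afterwards reads both disks in full, so expect it to take about as long as the clone itself.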